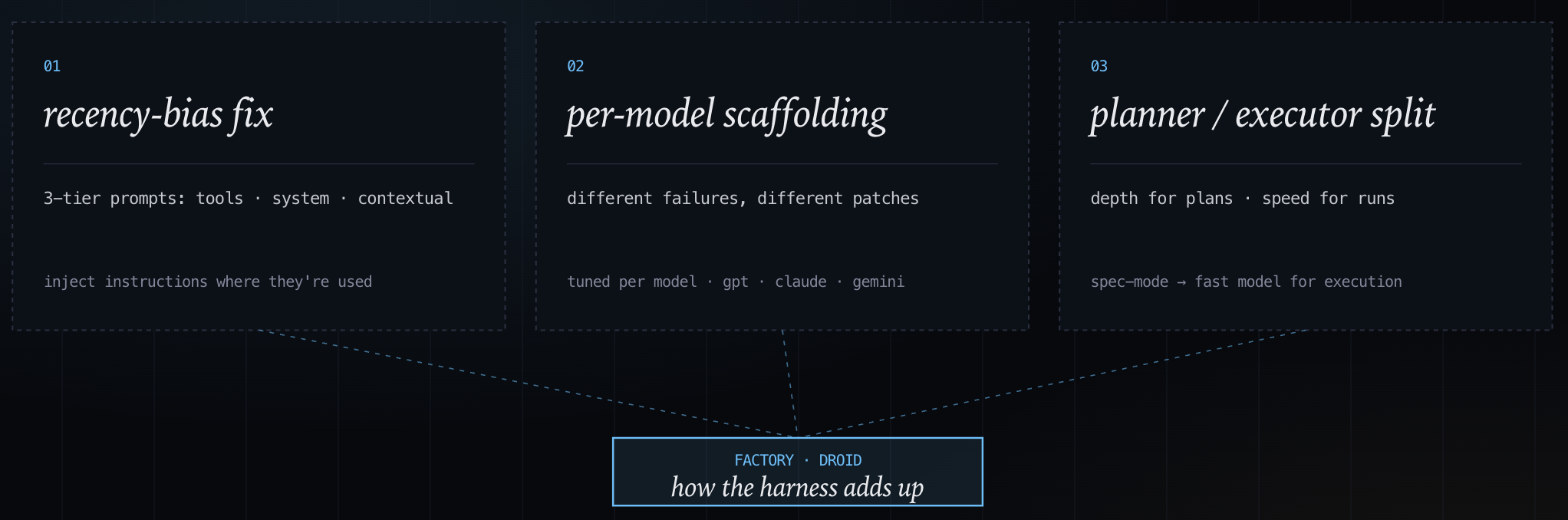

Droid injects prompt context dynamically. Most coding agents put everything in the system prompt (behavioral rules, tool descriptions, project conventions) and leave it there for the entire session. The problem with this approach is recency bias: instructions at the top of a 10,000-token context are weighted less than instructions near the current turn. Droid's prompting strategy instead injects each notification next to the turn where it becomes relevant, at the moment it becomes relevant.

The rest of the design follows the same logic: per-model schema tuning, because different providers fail differently at tool calling, and a planning/execution split that routes specification work to a stronger reasoning model and leaves tool calls and file writes to a faster model.

Contextual instruction injection (recency bias)

Most agents place a large block of instructions at the start of the system prompt, followed by tool calls and their results. LLMs tend to weight recent tokens more heavily than early ones, so instructions at the top of a 10,000-token prompt are followed less reliably than the same instructions injected right before they're needed.

Droid uses a three-tier prompting structure that addresses this directly. The tiers are tool descriptions, the system prompt, and contextually injected notifications, so each piece of information is available when it is needed.
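A minimal sketch of how the three tiers could be assembled, assuming an OpenAI-style list of chat messages and a hypothetical event-trigger mechanism for notifications (`Notification`, `PromptBuilder`, and the `tests_failed` event are illustrative names, not Droid's API). Tiers 1 and 2 stay fixed at the top; tier 3 is appended after the conversation history only when its trigger fires.

```python
from dataclasses import dataclass, field


@dataclass
class Notification:
    trigger: str  # hypothetical event name that makes this note relevant
    text: str


@dataclass
class PromptBuilder:
    tool_descriptions: str  # tier 1
    system_prompt: str      # tier 2
    notifications: list[Notification] = field(default_factory=list)  # tier 3

    def build(self, history: list[dict], events: set[str]) -> list[dict]:
        # Tiers 1 and 2 sit at the top and stay fixed for the whole session.
        messages = [{"role": "system", "content": f"{self.tool_descriptions}\n\n{self.system_prompt}"}]
        messages.extend(history)
        # Tier 3: only notifications whose trigger fired this turn, appended
        # after the history so they sit right next to the current turn.
        due = [n.text for n in self.notifications if n.trigger in events]
        if due:
            messages.append({"role": "system", "content": "\n".join(due)})
        return messages


builder = PromptBuilder(
    tool_descriptions="edit_file(path, old, new): replace text in a file",
    system_prompt="You are a coding agent working in this repository.",
    notifications=[Notification("tests_failed", "Read the failing test output before editing again.")],
)
history = [{"role": "user", "content": "Fix the failing test"}]
quiet = builder.build(history, events=set())
alerted = builder.build(history, events={"tests_failed"})
```

Here `quiet` has two messages, while `alerted` ends with the test-failure reminder as its last message. Whether Droid uses a system-role message or some other wrapper for injected notifications is an assumption of this sketch.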

Per-model tool-call schemas (scaffolding)

Different models fail at different points when calling tools. Some misinterpret nested schemas, some retry too aggressively on errors, and some have formatting tendencies that cause cascading failures. Droid therefore uses per-model adaptations rather than a single universal prompt.

The per-model tuning also applies to schema structure: schemas are kept flat rather than nested and include only required fields. A complex schema leads the model to make more formatting errors, and each error forces the agent either to retry, burning tokens, or to surface a failure. Smaller schemas reduce the failure surface before any retry logic kicks in. This is more explicit than ForgeCode's schema-flattening approach, in which the harness is adapted to the model.
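To make "flat, required-only" concrete, here is a sketch that flattens a nested JSON Schema object into a single level and drops optional fields, plus a per-model lookup that decides whether to apply it. The function names, the `target_path` naming convention, and the model profiles are hypothetical; Droid's actual per-model rules are not published in this writeup.

```python
def flatten_schema(schema: dict, prefix: str = "") -> dict:
    """Flatten nested object properties into one level, keeping only required fields."""
    flat: dict = {}
    required = set(schema.get("required", []))
    for name, prop in schema.get("properties", {}).items():
        if name not in required:
            continue  # optional fields are dropped to shrink the failure surface
        key = f"{prefix}{name}"
        if prop.get("type") == "object":
            # Hoist the nested object's required fields up with a name prefix.
            flat.update(flatten_schema(prop, prefix=f"{key}_")["properties"])
        else:
            flat[key] = prop
    return {"type": "object", "properties": flat, "required": list(flat)}


# Hypothetical per-model profiles; model names and settings are illustrative only.
MODEL_PROFILES = {
    "model-that-struggles-with-nesting": {"flatten": True},
    "model-that-handles-nesting": {"flatten": False},
}


def schema_for(model: str, schema: dict) -> dict:
    # Unknown models default to the safer, flattened schema.
    profile = MODEL_PROFILES.get(model, {"flatten": True})
    return flatten_schema(schema) if profile["flatten"] else schema


nested = {
    "type": "object",
    "properties": {
        "target": {
            "type": "object",
            "properties": {"path": {"type": "string"}, "encoding": {"type": "string"}},
            "required": ["path"],
        },
        "content": {"type": "string"},
        "dry_run": {"type": "boolean"},
    },
    "required": ["target", "content"],
}
flat = schema_for("model-that-struggles-with-nesting", nested)
print(sorted(flat["properties"]))  # ['content', 'target_path']
```

The flattened tool call needs only two top-level string arguments, so there is no nested object for the model to mis-nest and no optional field for it to fill incorrectly.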

Droid's Terminal Bench numbers show the same harness obtaining different results with different models: 77.3% on GPT-5.3-Codex, 69.9% on Claude Opus 4.6, 64.9% on GPT-5.2, and 61.1% on Gemini 3 Pro.
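A quick calculation on the scores quoted above shows how large the model effect is when the harness is held fixed. The numbers come from the text; the snippet only computes the gap between the best and worst model.

```python
# Scores quoted above for the same Droid harness with different models (percent).
scores = {"GPT-5.3-Codex": 77.3, "Claude Opus 4.6": 69.9, "GPT-5.2": 64.9, "Gemini 3 Pro": 61.1}

best = max(scores, key=scores.get)
worst = min(scores, key=scores.get)
spread = round(scores[best] - scores[worst], 1)  # percentage points
print(best, worst, spread)  # GPT-5.3-Codex Gemini 3 Pro 16.2
```

A 16.2-point spread with an unchanged harness is the argument for tuning per model rather than shipping one universal prompt.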

Mixed models for planning vs. execution

Factory frames this as separate planning and execution steps. A heavier model handles task decomposition and trajectory selection; a cheaper, faster model then produces the concrete edits during execution.
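A minimal sketch of the planning/execution split, assuming a generic `(model_name, prompt) -> text` client and a plan formatted as numbered lines. `PlanExecuteRouter`, `fake_call`, and the model names are placeholders for illustration, not Factory's implementation; a real harness would also give the executor tool access rather than plain text prompts.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical model client: (model_name, prompt) -> completion text.
ModelFn = Callable[[str, str], str]

STEP_RE = re.compile(r"^\s*\d+\.\s*(.+)$")


@dataclass
class PlanExecuteRouter:
    planner_model: str   # heavier reasoning model (placeholder name)
    executor_model: str  # cheaper, faster model (placeholder name)
    call: ModelFn

    def run(self, task: str) -> list[str]:
        # Planning: one call to the heavy model decomposes the task.
        plan = self.call(self.planner_model, f"Break this task into numbered steps:\n{task}")
        steps = [m.group(1) for line in plan.splitlines() if (m := STEP_RE.match(line))]
        # Execution: each step goes to the cheaper, faster model.
        return [self.call(self.executor_model, f"Carry out this step: {s}") for s in steps]


def fake_call(model: str, prompt: str) -> str:
    """Stand-in for a real API client, for demonstration only."""
    if model == "planner":
        return "1. Locate the failing test\n2. Patch the parser"
    return f"[{model}] {prompt}"


router = PlanExecuteRouter("planner", "executor", fake_call)
results = router.run("Fix the JSON parser bug")
```

The expensive model is called once per task, while the cheap model absorbs the per-step calls, which is where most of the token volume sits.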

Session initialization injects system information — OS, shell, installed tools, current directory — before the first turn, so the agent doesn't burn early tool calls discovering its environment. Search uses ripgrep rather than grep for its parallel execution and .gitignore awareness. Timeout tuning is per-tool rather than a global value — a shell command forking a build process gets a longer timeout than a file read. Background process primitives handle long-running services like dev servers or test watchers without blocking the agent loop.
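The session-setup ideas above can be sketched with the Python standard library: an environment snapshot built once before the first turn, a per-tool timeout table, and a non-blocking launcher for long-running services. The tool names (`read_file`, `search`, `shell`) and the timeout values are hypothetical; the source gives no concrete numbers.

```python
import os
import platform
import shutil
import subprocess


def environment_snapshot(tools=("git", "rg", "python3", "node")) -> str:
    """Collect OS, shell, cwd and installed tools once, for the first prompt."""
    installed = [t for t in tools if shutil.which(t)]
    return "\n".join([
        f"OS: {platform.system()} {platform.release()}",
        f"Shell: {os.environ.get('SHELL', 'unknown')}",
        f"CWD: {os.getcwd()}",
        f"Installed tools: {', '.join(installed) or 'none detected'}",
    ])


# Hypothetical per-tool timeouts in seconds: a build gets far longer than a read.
TOOL_TIMEOUTS = {"read_file": 10, "search": 30, "shell": 600}


def run_tool_command(cmd: list[str], tool: str = "shell") -> subprocess.CompletedProcess:
    # subprocess.run raises TimeoutExpired if this tool's budget is exceeded.
    return subprocess.run(cmd, capture_output=True, text=True, timeout=TOOL_TIMEOUTS[tool])


def start_background(cmd: list[str]) -> subprocess.Popen:
    """Launch a long-running service (e.g. a dev server) without blocking the loop."""
    return subprocess.Popen(cmd, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
```

For example, `run_tool_command(["rg", "-n", "TODO"], tool="search")` would run ripgrep under the 30-second search budget, while a process returned by `start_background` can later be stopped with its `terminate()` method.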

Guardrails by default (trade-off)

Droid ships guardrails for secret detection (Droid Shield scans output for credentials before they're written), LLM safety policies with allow/deny enforcement, and sandbox isolation. Autonomy levels (low, medium, high) control how much runs without confirmation. At high, Droid runs fully unattended; this is how Droid Exec operates non-interactively in CI/CD pipelines. Pi makes a different call: it keeps the core small and ships guardrails as extensions. Droid's default is heavier, but safer out of the box.
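As an illustration of how autonomy levels and secret scanning might gate actions, here is a sketch with an ordered autonomy enum and two well-known credential patterns. The action-to-risk mapping and the function names are hypothetical; Droid Shield's actual rules and Droid's policy format are not described in the source.

```python
import re
from enum import IntEnum


class Autonomy(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical risk tier per action; unknown actions are treated as high risk.
ACTION_RISK = {"read_file": Autonomy.LOW, "edit_file": Autonomy.MEDIUM, "shell": Autonomy.HIGH}

# Two well-known credential shapes; a real scanner would use many more rules.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                    # AWS access key ID
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]


def needs_confirmation(action: str, level: Autonomy) -> bool:
    """Ask the user unless the session's autonomy level covers the action's risk."""
    return ACTION_RISK.get(action, Autonomy.HIGH) > level


def contains_secret(text: str) -> bool:
    """Return True if any known credential pattern appears in text about to be written."""
    return any(p.search(text) for p in SECRET_PATTERNS)
```

At `Autonomy.HIGH` no action requires confirmation, which matches the fully unattended mode; at `Autonomy.LOW` only reads pass without a prompt. Secret scanning runs independently of the autonomy level, so `contains_secret("aws_key = AKIAIOSFODNN7EXAMPLE")` (AWS's documented example key ID) would still block the write.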

One caveat with Droid: contextual injection is only as good as the harness's retrieval and compaction. If the harness drops the wrong context when the context window fills up, the advantage of this method disappears.

The 77.3% Terminal Bench result matters because it places Droid's approach in the top 10 of the leaderboard.

The full leaderboard is at tbench.ai. Droid's benchmark writeup is at factory.ai/news/terminal-bench.