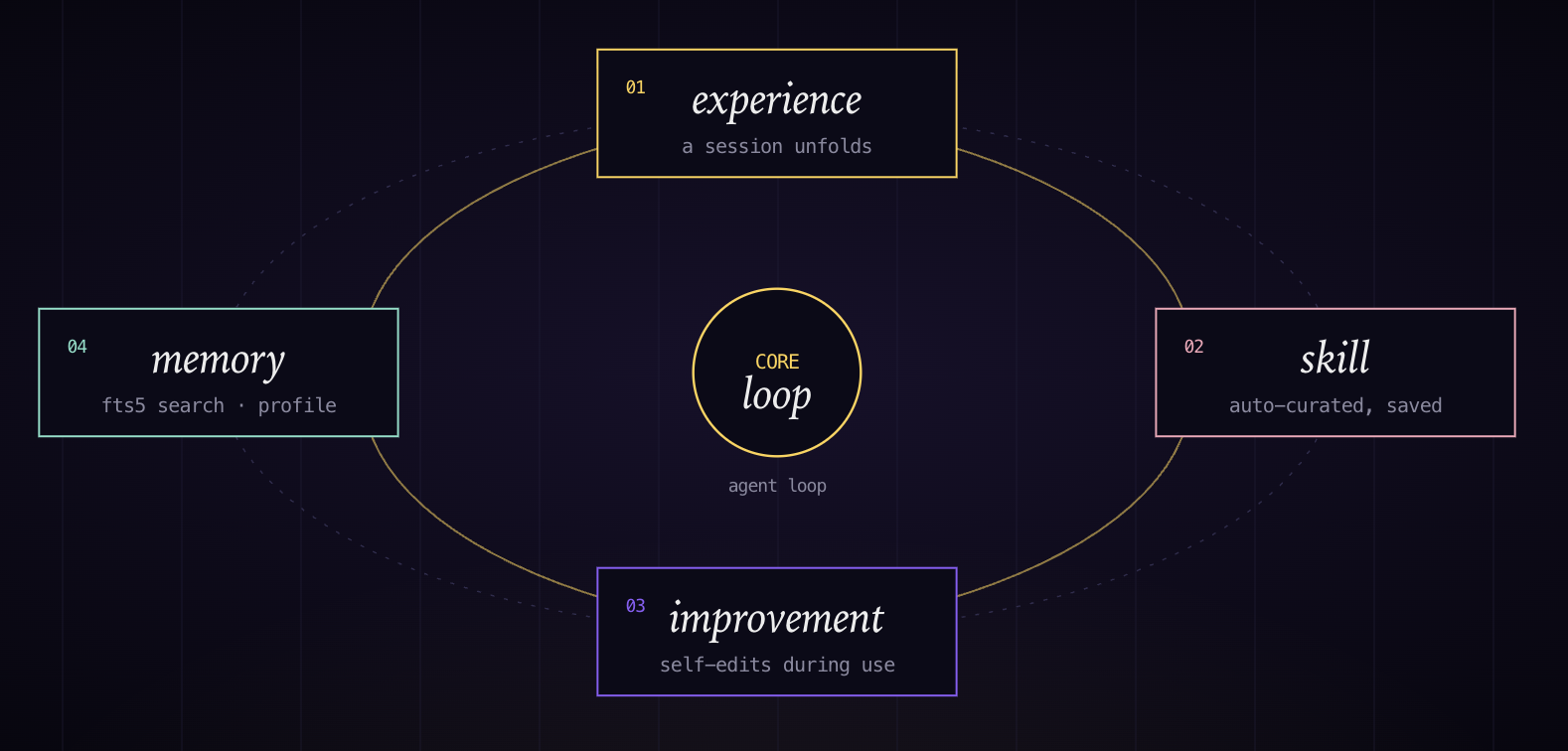

Learning Loop & Memory

After completing a complex task, the agent autonomously extracts a reusable skill from what it did. The next time a similar task comes up, that skill is available. Skills self-update as the agent uses them and finds better approaches. A separate "nudge" system periodically prompts the agent to consolidate what it has learned into long-term memory. Past conversations are indexed with SQLite FTS5 and retrieved with LLM summarization, so something you worked through six months ago can surface as relevant context today.
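To make the retrieval step concrete, here is a minimal sketch of full-text indexing over past conversations with SQLite FTS5. It is not Hermes's actual schema: the table name `messages`, its columns, and the helper functions are all assumptions for illustration, and the LLM summarization step is left out. It also assumes your Python's bundled SQLite was compiled with FTS5, which is common but not guaranteed.

```python
import sqlite3


def build_index(conn: sqlite3.Connection) -> None:
    # Hypothetical schema: only `content` is searchable; the other
    # columns are stored but excluded from the full-text index.
    conn.execute(
        "CREATE VIRTUAL TABLE IF NOT EXISTS messages "
        "USING fts5(session_id UNINDEXED, role UNINDEXED, content)"
    )


def add_message(conn: sqlite3.Connection, session_id: str, role: str, content: str) -> None:
    conn.execute(
        "INSERT INTO messages (session_id, role, content) VALUES (?, ?, ?)",
        (session_id, role, content),
    )


def search(conn: sqlite3.Connection, query: str, limit: int = 5) -> list:
    # Quote each term so punctuation in user text is not parsed as
    # FTS5 query syntax; space-separated terms are implicitly ANDed.
    terms = " ".join('"' + t.replace('"', '""') + '"' for t in query.split())
    # bm25() returns lower values for better matches, so ascending
    # order puts the most relevant rows first.
    return conn.execute(
        "SELECT session_id, role, content FROM messages "
        "WHERE messages MATCH ? ORDER BY bm25(messages) LIMIT ?",
        (terms, limit),
    ).fetchall()
```

In a setup like this, the rows returned by `search` would be handed to a model for summarization before being placed in the agent's context, rather than being inserted verbatim.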

Personalization: Honcho

The user modeling layer is called Honcho. It uses session history to build and refine a model of the user: not just command history, but preferences, communication style, and workflow patterns.

Scaling Hermes: Atropos, Backends, and Ecosystem

The most unusual component is Atropos, an optional reinforcement learning integration that turns agent sessions into training data. Real tasks generate trajectories; those trajectories get compressed and published — the maintainer regularly ships their own work sessions to Hugging Face. The agent's usage generates the data that trains better versions of itself. Nous Research uses this for improving tool-calling models.
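The source does not say what "compressed" means here or what format the published trajectories use. As a rough sketch under one plausible reading (trimming long tool outputs so a session fits a token budget), the step might look like the following. The step dictionaries, the `role`/`content` keys, and both function names are hypothetical and are not taken from Atropos or Hermes.

```python
import json


def compress_trajectory(steps: list, max_tool_chars: int = 200) -> list:
    """Return a copy of `steps` with long tool outputs truncated."""
    compressed = []
    for step in steps:
        step = dict(step)  # shallow copy so the caller's data is untouched
        content = step.get("content", "")
        if step.get("role") == "tool" and len(content) > max_tool_chars:
            dropped = len(content) - max_tool_chars
            step["content"] = content[:max_tool_chars] + f" [truncated {dropped} chars]"
        compressed.append(step)
    return compressed


def to_jsonl_record(task: str, steps: list) -> str:
    # One trajectory per line is a common layout for training datasets.
    return json.dumps({"task": task, "steps": steps}, ensure_ascii=False)
```

Truncating only tool output keeps the user request and the agent's own reasoning intact, which is usually the part a tool-calling model needs to learn from.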

Hermes has no published benchmark results, including on Terminal-Bench 2.0. The repository publishes no accuracy numbers, latency figures, or comparisons. The 125K GitHub stars (18,600 forks, v0.11.0 as of April 23, 2026) are the only external signal, and they measure popularity rather than performance.

The infrastructure flexibility is broader than that of any comparable agent: six execution backends covering local, Docker, SSH, Daytona, Singularity, and Modal, plus a built-in cron scheduler for unattended automation. It reaches 200+ models via OpenRouter, Nous Portal, and NVIDIA NIM. It also runs as a bot across Telegram, Discord, Slack, WhatsApp, Signal, and Email, which points beyond a pure coding agent toward a general automation assistant.

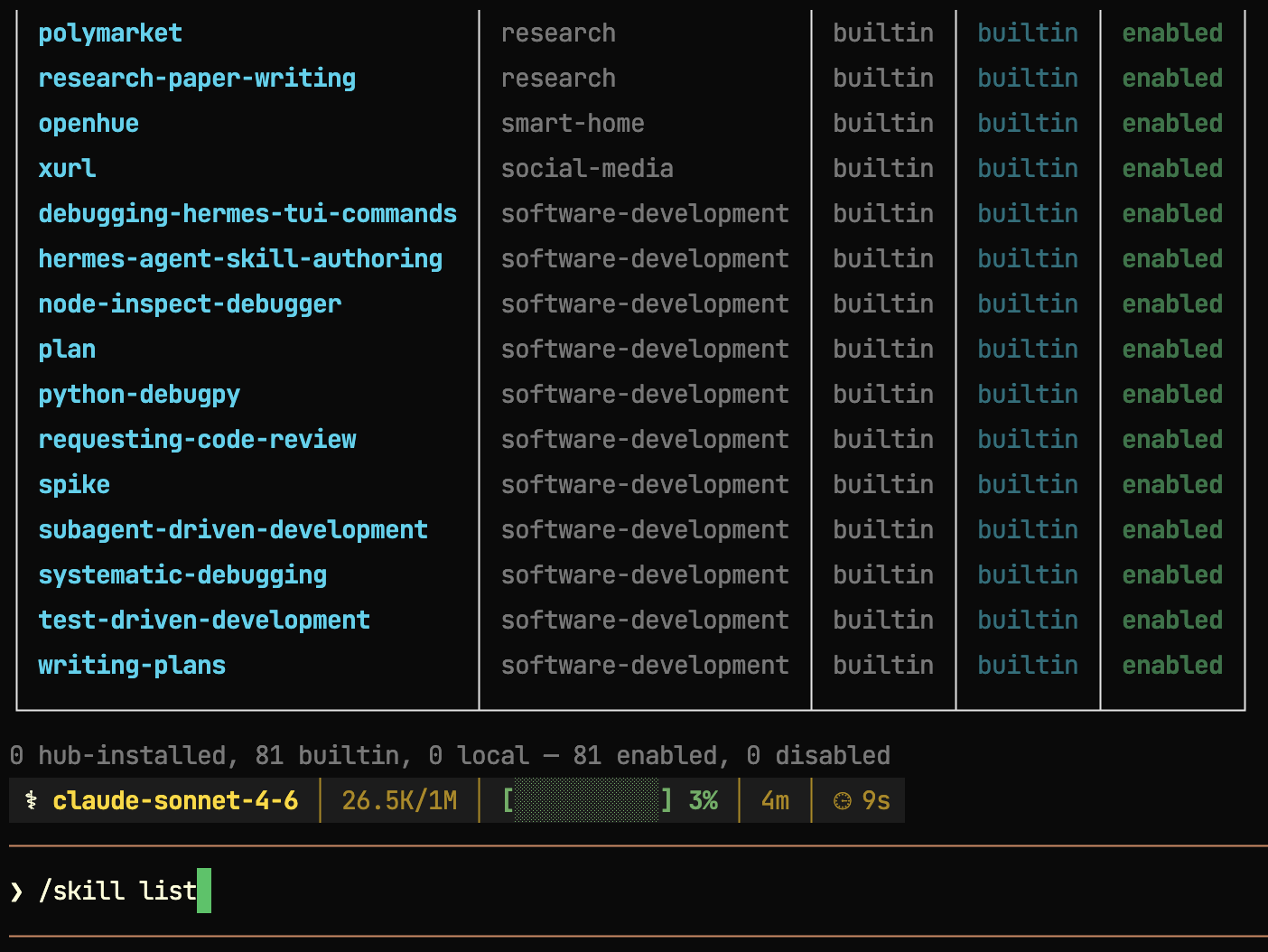

The skill ecosystem is built around an open standard at agentskills.io, where community-built capability packages are shareable and installable by name. It's a direct parallel to what package managers do for code, applied to agent capabilities.

Hermes's approach to learning from previous interactions has not been formally evaluated, so there is no evidence yet that the learning improves user performance over time. A proper evaluation is needed to establish whether the approach works in practice.

The repo is at NousResearch/hermes-agent.

You can try Hermes at Tensorlake's harness.new site.