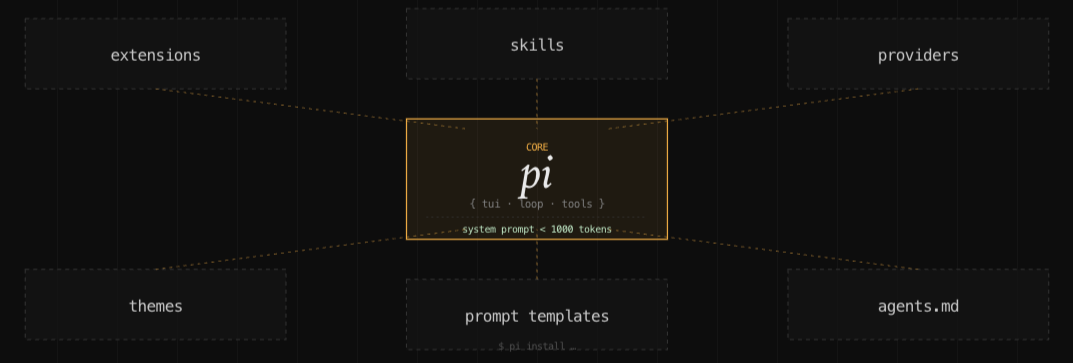

Pi's efficient system prompt

Claude Code uses ~10,000 tokens of system prompt, OpenCode ~10,000+, and Cline ~7,000. Pi's sub-1K total is roughly 7 to 10× smaller, which frees about 6,000 to 9,000 tokens of context window on every request. This works because frontier models don't need extensive behavioral instructions: they already know how to reason, use tools, and follow conventions. If a minimal prompt is just as effective, every token it saves goes to the task instead.
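As a rough sanity check on these numbers, the sketch below computes how much of the context window each prompt leaves for the task. The 200,000-token window is an assumption chosen for illustration, OpenCode is taken at its 10,000 lower bound, and Pi is taken at its 1K upper bound.

```python
# Illustrative arithmetic only. CONTEXT_WINDOW is an assumed value,
# not a figure from any of the tools discussed here.
CONTEXT_WINDOW = 200_000

# Approximate system-prompt sizes quoted in the text.
PROMPT_TOKENS = {
    "Claude Code": 10_000,
    "OpenCode": 10_000,
    "Cline": 7_000,
    "Pi": 1_000,
}


def remaining_context(prompt_tokens: int, window: int = CONTEXT_WINDOW) -> int:
    """Tokens left for the task once the system prompt is loaded."""
    return window - prompt_tokens


def tokens_freed(agent: str, baseline: str = "Pi") -> int:
    """Tokens saved per request by using `baseline` instead of `agent`."""
    return PROMPT_TOKENS[agent] - PROMPT_TOKENS[baseline]


for name, tokens in PROMPT_TOKENS.items():
    left = remaining_context(tokens)
    share = left / CONTEXT_WINDOW
    print(f"{name:12s} prompt={tokens:>6,}  left={left:>7,}  ({share:.1%} of window)")
```

Under these assumptions the prompt itself shrinks by up to 10×, while the saving in absolute terms is a few thousand tokens per request. That saving recurs on every turn, which is where it adds up.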

The mechanism that makes this work without sacrificing capability is what Pi calls lazy skills. Each capability package keeps only its description in context on every turn. The full instructions and tool schemas load only when the skill is invoked, either explicitly via /skill:name or through automatic detection. Compared to MCP's approach of preloading all tool schemas at session start, this saves a significant number of tokens on any task that uses only a fraction of the available tools.
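The lazy-loading idea can be sketched in a few lines. This is not Pi's implementation: the `Skill` and `SkillRegistry` classes, the `pdf` skill, and the loader function are all hypothetical names used for illustration. Only the /skill:name command form comes from the description above.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class Skill:
    name: str
    description: str                 # short text that stays in context every turn
    loader: Callable[[], str]        # fetches the full instructions on demand
    _body: Optional[str] = field(default=None, repr=False)

    def load(self) -> str:
        """Load the full instructions once, then reuse the cached copy."""
        if self._body is None:
            self._body = self.loader()
        return self._body


class SkillRegistry:
    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def context_stub(self) -> str:
        """What goes into the prompt on every turn: names and descriptions only."""
        return "\n".join(f"- {s.name}: {s.description}" for s in self._skills.values())

    def invoke(self, command: str) -> str:
        """Resolve a '/skill:name' command to the skill's full instructions."""
        prefix = "/skill:"
        if not command.startswith(prefix):
            raise ValueError(f"not a skill command: {command!r}")
        return self._skills[command[len(prefix):].strip()].load()


load_count = 0


def load_pdf_skill() -> str:
    # Hypothetical loader; a real one might read a file from disk.
    global load_count
    load_count += 1
    return "Full PDF instructions and tool schemas (placeholder text)."


registry = SkillRegistry()
registry.register(Skill("pdf", "Extract text and tables from PDF files", load_pdf_skill))
print(registry.context_stub())
print(registry.invoke("/skill:pdf"))
```

The per-turn cost is one line per skill, and the expensive body is paid for only by sessions that actually use it.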

Pi's agent loop is a minimal version of ReAct: stream the LLM response, return if there are no tool calls, and otherwise execute them and loop. Its execution strategy runs independent tools in parallel and dependent ones sequentially. If the model requests reads across ten files in a single turn, all ten fire simultaneously.
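A minimal sketch of such a loop, using asyncio, might look like the following. It is not Pi's code: the message shapes, `fake_llm`, `read_file`, and the `max_turns` guard are assumptions made for the example, and streaming is not modeled. Tool calls requested in the same turn are treated as independent and run concurrently; calls that depend on earlier results naturally land in later turns.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict, List

Message = Dict[str, Any]
Tool = Callable[..., Awaitable[str]]


async def agent_loop(
    llm: Callable[[List[Message]], Awaitable[Message]],
    tools: Dict[str, Tool],
    messages: List[Message],
    max_turns: int = 20,  # assumed safety cap, not a documented Pi setting
) -> str:
    for _ in range(max_turns):
        reply = await llm(messages)
        messages.append(reply)
        calls = reply.get("tool_calls", [])
        if not calls:
            return reply["content"]  # no tool calls: the loop is done
        # Run every tool call from this turn concurrently.
        results = await asyncio.gather(
            *(tools[c["name"]](**c["args"]) for c in calls)
        )
        for call, result in zip(calls, results):
            messages.append({"role": "tool", "name": call["name"], "content": result})
    raise RuntimeError("turn limit reached")


active = 0
peak = 0


async def read_file(path: str) -> str:
    """Hypothetical tool that records how many reads are in flight at once."""
    global active, peak
    active += 1
    peak = max(peak, active)
    await asyncio.sleep(0.01)  # stand-in for real I/O
    active -= 1
    return f"<contents of {path}>"


async def fake_llm(messages: List[Message]) -> Message:
    """Stand-in model: asks for ten reads, then answers."""
    done = sum(m["role"] == "tool" for m in messages)
    if done == 0:
        calls = [{"name": "read_file", "args": {"path": f"file{i}.txt"}} for i in range(10)]
        return {"role": "assistant", "content": "", "tool_calls": calls}
    return {"role": "assistant", "content": f"read {done} files"}


answer = asyncio.run(
    agent_loop(fake_llm, {"read_file": read_file}, [{"role": "user", "content": "summarize"}])
)
print(answer, "| peak concurrent reads:", peak)
```

The `peak` counter is there only to make the concurrency visible: with sequential execution it would never rise above one.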

Pi deliberately leaves some features out of the default install: doom-loop detection, sandboxing, and approval gates are all opt-in extensions. A fresh install is minimal and fast, but it ships with no safety features enabled. Whether that's the right default depends on how much you trust the models you're running.

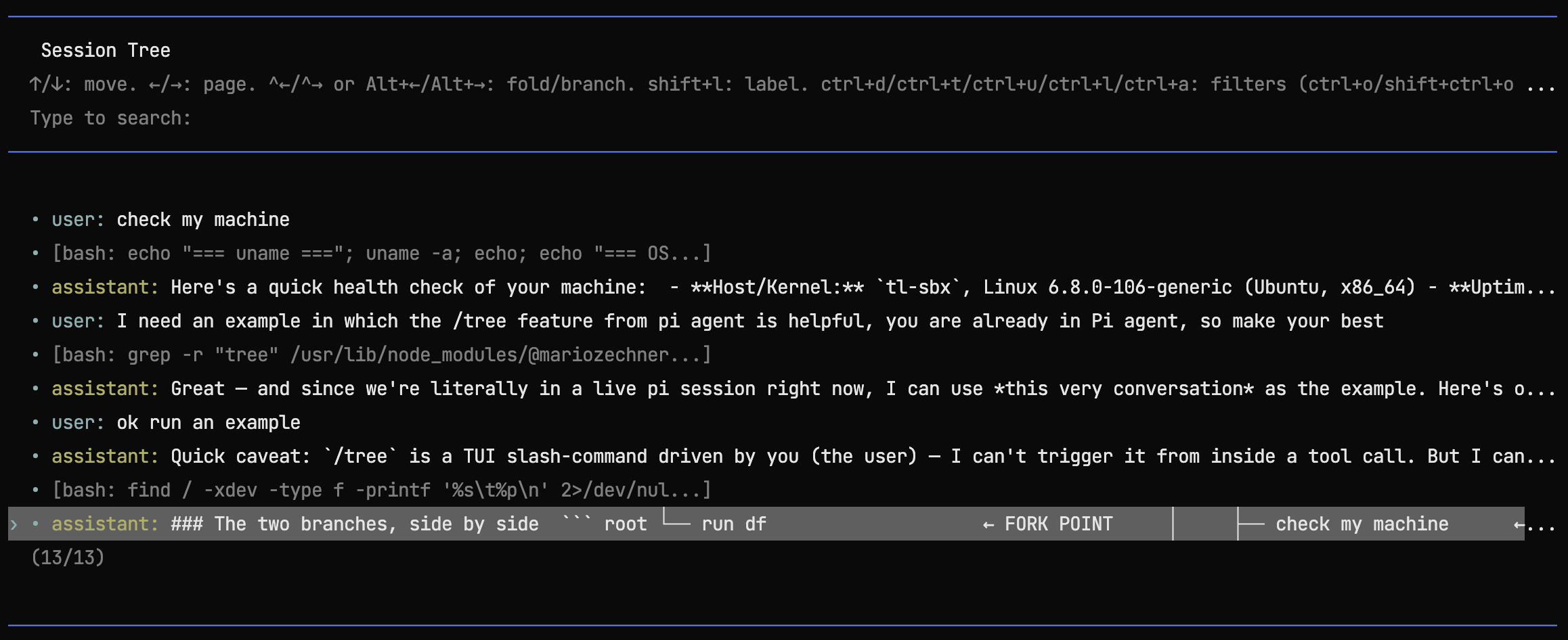

Sessions are stored as JSONL with id and parentId per entry, making the history a DAG rather than a flat list. Branching mid-conversation is a first-class operation — /fork detaches to a new file, /tree visualizes the full graph. For tasks that explore multiple approaches before committing to one, this is more useful than a linear history that forces you to either pick a path or lose the alternatives.
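To make the structure concrete, here is a small sketch of reading such a file and walking one branch back to the root. Only the `id` and `parentId` fields come from the description above. The `text` field, the sample entries, and the helper names are hypothetical.

```python
import json
from typing import Dict, List

# Hypothetical session: entry "a" has two children, so the history branches.
SESSION_JSONL = """\
{"id": "a", "parentId": null, "text": "user: fix the failing test"}
{"id": "b", "parentId": "a", "text": "assistant: try patching the parser"}
{"id": "c", "parentId": "b", "text": "assistant: parser patch works"}
{"id": "d", "parentId": "a", "text": "assistant: try rewriting the test"}
"""


def load_entries(jsonl: str) -> Dict[str, dict]:
    """Parse one JSON object per line and index the entries by id."""
    entries = [json.loads(line) for line in jsonl.splitlines() if line.strip()]
    return {e["id"]: e for e in entries}


def branch_to(entries: Dict[str, dict], leaf_id: str) -> List[dict]:
    """Follow parentId links from a leaf to the root; return root-first order."""
    path = []
    current = leaf_id
    while current is not None:
        entry = entries[current]
        path.append(entry)
        current = entry["parentId"]
    return list(reversed(path))


def leaves(entries: Dict[str, dict]) -> List[str]:
    """Ids that no other entry names as its parent: the tip of each branch."""
    parents = {e["parentId"] for e in entries.values()}
    return [entry_id for entry_id in entries if entry_id not in parents]


entries = load_entries(SESSION_JSONL)
for tip in leaves(entries):
    print(tip, "<-", [e["id"] for e in branch_to(entries, tip)])
```

Because each entry records its parent, appending a new entry under an older one creates a branch without rewriting anything already in the file.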

Context compaction triggers automatically when the window fills, with 16K tokens always reserved for the LLM's response so there is always room for it to answer. The full conversation history is preserved in the JSONL file regardless of compaction: what gets summarized is the in-context representation, not the record. /compact [instructions] lets you trigger it manually with guidance on what to prioritize in the summary.
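One way to picture the trigger and the split between record and in-context view is the sketch below. The 16K reserve is from the text. The 200,000-token window, the exact threshold rule, the `keep_recent` parameter, and the toy summarizer are assumptions, not Pi's actual behavior.

```python
from typing import Callable, List

RESERVED_FOR_RESPONSE = 16_000   # reserve mentioned in the text
CONTEXT_WINDOW = 200_000         # assumed window size for illustration


def should_compact(
    context_tokens: int,
    window: int = CONTEXT_WINDOW,
    reserve: int = RESERVED_FOR_RESPONSE,
) -> bool:
    """Compact once the context would eat into the space kept for the reply."""
    return context_tokens > window - reserve


def compact(
    history: List[str],
    summarize: Callable[[List[str]], str],
    keep_recent: int = 2,
) -> List[str]:
    """Build a shorter in-context view; `history` itself is never modified."""
    split = max(len(history) - keep_recent, 0)
    older, recent = history[:split], history[split:]
    if not older:
        return list(recent)
    return [summarize(older)] + recent


def toy_summarizer(messages: List[str]) -> str:
    # Stand-in for an LLM-written summary.
    return f"[summary of {len(messages)} earlier messages]"


full_history = ["m1", "m2", "m3", "m4", "m5"]
in_context = compact(full_history, toy_summarizer)
print(in_context)
print(len(full_history), "messages still on record")
```

The point of returning a new list is that compaction changes what the model sees, while the stored record stays complete.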

The TUI is minimal as well: it does not take over the full screen, it appends to scrollback rather than replacing it, and it is tmux-compatible. It is a small decision, but it reflects the same instinct as the prompt size: don't take more than you need.

Pi has not been formally evaluated on Terminal-Bench or any other published benchmark, and the repo makes no performance claims. That said, the maintainer publishes real work sessions to Hugging Face as training data, which at least means the agent is being used on actual tasks rather than synthetic ones.

Pi has 41.5K GitHub stars and is embedded via RPC in OpenClaw (145K stars/week). It's built by Mario Zechner and Armin Ronacher. Ronacher is the author of Flask and Jinja2, and the "do less in the framework, trust the user" instinct that shaped those projects is visible in every architectural decision here.

The repo is at badlogic/pi-mono.

Pi is available as a harness on Tensorlake's Harness site: https://www.harness.new/.